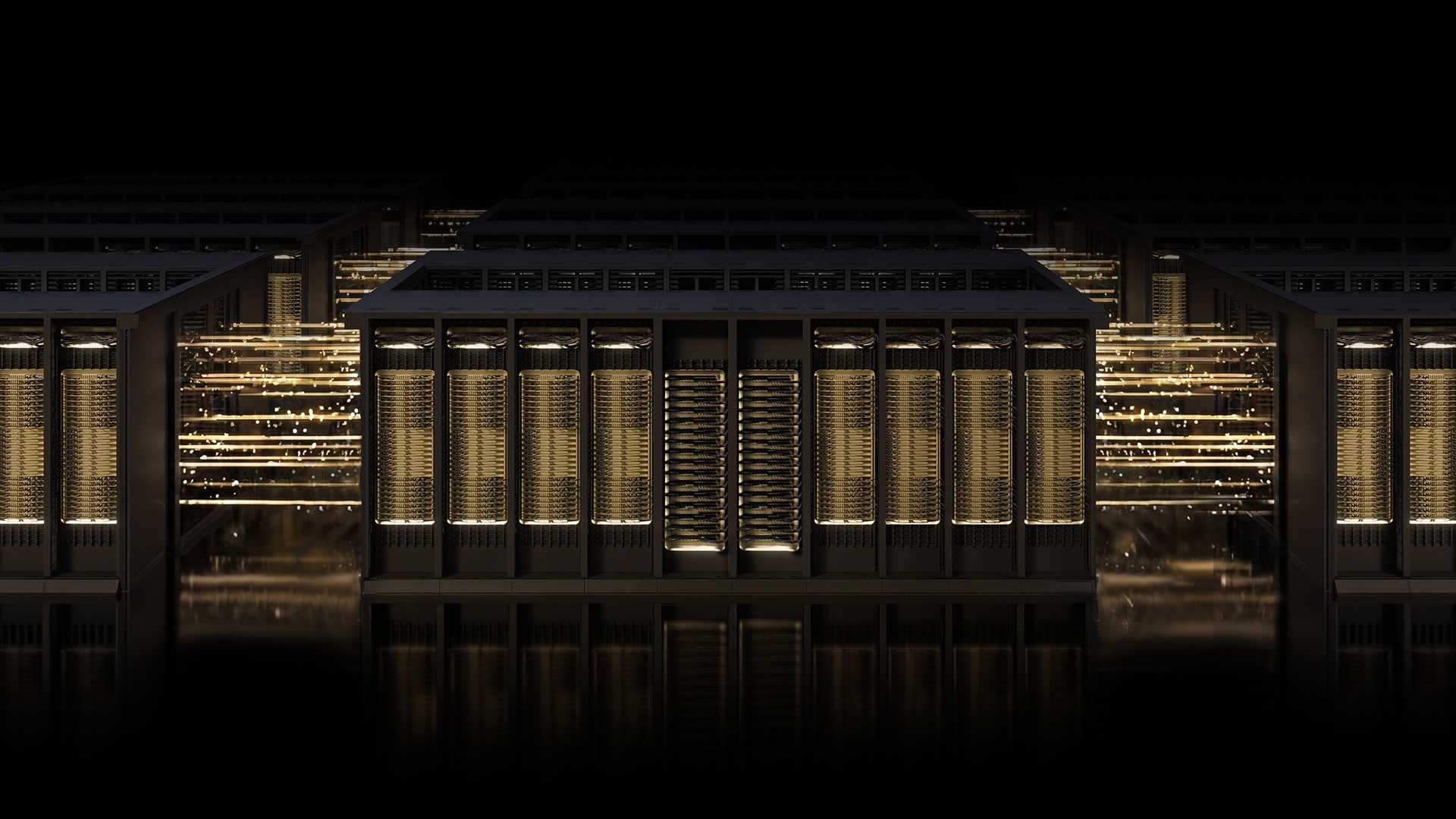

The race to build the world's most powerful AI factories demands networking that can keep pace with the ambitions of AI itself. NVIDIA Spectrum-X Ethernet scale-out infrastructure has emerged as the most advanced AI networking technology available today, deployed by industry leaders who cannot afford to compromise on performance, resilience, or scale. At the heart of this revolution is Multipath Reliable Connection (MRC), an RDMA transport protocol that reimagines data flow for gigascale AI. Here are 10 pivotal things you need to know about this game-changing technology.

1. The AI Factory Race

Artificial intelligence is pushing the boundaries of what computing can achieve. Training frontier models requires massive clusters of GPUs working in unison, and the network connecting them must be as advanced as the GPUs themselves. Traditional Ethernet fabrics struggle with the demands of AI workloads, which need low latency, high bandwidth, and very little tolerance for congestion. NVIDIA Spectrum-X was purpose-built to address these challenges and provides a foundation for the world's largest AI factories. It is not just about speed; it is about reliability at scale. As AI models grow to trillions of parameters, the network can become a critical bottleneck. Spectrum-X is designed to remove that bottleneck so researchers can focus on innovation rather than infrastructure.

2. Meet Spectrum-X: The Open, AI-Native Ethernet Fabric

NVIDIA Spectrum-X is not just another Ethernet solution; it is an AI-native fabric designed from the ground up for modern AI workloads. It combines purpose-built hardware, deep telemetry, and intelligent fabric control to deliver unparalleled performance. Unlike general-purpose networks, Spectrum-X is optimized for the traffic patterns of distributed training and inference. It scales to thousands of GPUs while keeping jitter and packet loss to a minimum. The platform is also open, which encourages community innovation. This openness matters for the AI ecosystem because it lets researchers and enterprises customize their networking stacks without vendor lock-in. Spectrum-X sets the standard for what an AI network should be.

3. What is Multipath Reliable Connection (MRC)?

Multipath Reliable Connection (MRC) is an RDMA (Remote Direct Memory Access) transport protocol that represents a paradigm shift in AI networking. Traditionally, all traffic on a single RDMA connection follows one network path, so a few large flows can pile onto the same links while other links sit underutilized. MRC enables a single connection to spread its traffic across multiple paths simultaneously, which distributes the load, improves throughput, and enhances availability. MRC is the result of a collaboration among NVIDIA, Microsoft, and OpenAI, three leaders in AI infrastructure. It was first proven in production on Spectrum-X hardware and has now been released as an open specification through the Open Compute Project, making it accessible to the entire industry.
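To make the single-path versus multipath contrast concrete, here is a minimal Python sketch. It is a toy model, not the MRC specification: it assumes four equal-cost paths, uses a CRC32 hash of a made-up connection label (`qp-0`, `qp-1`) as a stand-in for ECMP-style flow hashing, and assumes simple round-robin packet spraying for the multipath case. The function names `single_path_loads` and `multipath_loads` are hypothetical.

```python
import zlib

NUM_PATHS = 4  # hypothetical number of equal-cost paths between two endpoints


def single_path_loads(flows: list[str], packets_per_flow: int) -> list[int]:
    """Single-path model: each connection is hashed once, and every one of
    its packets follows that one path (similar in spirit to ECMP hashing)."""
    loads = [0] * NUM_PATHS
    for flow in flows:
        path = zlib.crc32(flow.encode()) % NUM_PATHS
        loads[path] += packets_per_flow
    return loads


def multipath_loads(flows: list[str], packets_per_flow: int) -> list[int]:
    """Multipath model (assumed round-robin spraying): each connection
    spreads its packets evenly across all available paths."""
    loads = [0] * NUM_PATHS
    for _flow in flows:
        for seq in range(packets_per_flow):
            loads[seq % NUM_PATHS] += 1
    return loads


if __name__ == "__main__":
    flows = ["qp-0", "qp-1"]  # two hypothetical RDMA connections
    print("single-path loads:", single_path_loads(flows, 1000))
    print("multipath loads:  ", multipath_loads(flows, 1000))
```

Whatever paths the hash happens to pick, each busy path in the single-path model carries at least one full 1,000-packet connection and at least two paths sit idle, while the multipath model spreads the same 2,000 packets evenly at 500 per path. Real multipath transports also have to cope with packets arriving out of order, which this sketch ignores.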

4. The Road Grid Analogy: Why MRC Changes Everything

Think of traditional networking as a single-lane road connecting two towns. If there's an accident, traffic comes to a halt. MRC transforms this into a cleverly laid-out street grid paired with a real-time traffic app. Instead of one road, there are multiple routes, and the traffic app dynamically reroutes vehicles around slowdowns and closures. In networking terms, MRC uses all available paths to keep data flowing, even under heavy congestion. This analogy helps illustrate how MRC improves load balancing and resilience: it turns a fragile, single-path connection into a robust, multipath road network that adapts to changing conditions on the fly.

5. Industry Leaders Are Adopting MRC: OpenAI, Microsoft, and Oracle

When it comes to cutting-edge AI, the proof is in the deployment. OpenAI, Microsoft, and Oracle have all embraced MRC as a core component of their AI infrastructure. These organizations cannot afford downtime or performance degradation, and they rely on MRC to keep their massive training runs efficient. Microsoft's Fairwater data center and Oracle Cloud Infrastructure's Abilene facility, two of the largest AI factories built for training frontier LLMs, use MRC to meet their stringent requirements. The fact that these industry giants trust MRC signals its maturity and effectiveness. It is not just a theoretical concept; it is a proven solution running in production today.

6. OpenAI's Success Story: Efficiency at Scale

Sachin Katti, head of industrial compute at OpenAI, confirmed the impact of MRC: "Deploying MRC in the Blackwell generation was very successful and was made possible by a strong collaboration with NVIDIA. MRC's end-to-end approach enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale." This quote underscores how MRC directly addresses the pain points of large-scale AI training. Network slowdowns often cause GPU idle time, wasting compute resources and extending training time. MRC minimizes these issues, allowing OpenAI to push the boundaries of model size and complexity.

7. Microsoft and Oracle: Building the World's Largest AI Factories

Microsoft's Fairwater and Oracle's Abilene data centers are prime examples of gigascale AI factories. These facilities are purpose-built to train and deploy leading-edge frontier large language models (LLMs), and they rely on NVIDIA Spectrum-X Ethernet and MRC to deliver performance, scale, and efficiency. NVIDIA has collaborated with both Microsoft and Oracle for years to advance AI infrastructure. At facilities this large, every percentage point of GPU utilization matters. MRC is designed to keep the network from becoming the weak link, so these AI factories can operate at peak efficiency around the clock.

8. MRC Goes Open: The Open Compute Project

One of the most significant developments is the release of MRC as an open specification through the Open Compute Project (OCP). This move democratizes the technology, allowing any organization to implement MRC in its own networking stack. The open specification ensures that the benefits of MRC (higher throughput, better load balancing, and improved resilience) are not limited to NVIDIA hardware. It fosters an ecosystem of innovation in which third-party vendors and researchers can contribute to and improve the protocol. This openness aligns with the AI community's ethos of collaboration and shared progress.

9. Boosting GPU Utilization and Bandwidth

The primary goal of MRC is to maximize GPU utilization. In AI training, GPUs are the most expensive resource, and any idle time is costly. MRC achieves high GPU utilization by load-balancing traffic across all available paths in the network. This ensures every GPU gets the bandwidth it needs throughout a training run, even under heavy congestion. When congestion occurs, MRC dynamically avoids overloaded paths, rerouting data to less busy routes. This real-time adaptation sustains high bandwidth and prevents hotspots. The result is faster training times and lower total cost of ownership for AI infrastructure.
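The idea of steering around overloaded paths can be illustrated with a small Python sketch. It assumes a hypothetical per-path congestion signal (a yes/no report per observation, such as a congestion indication fed back to the sender), smooths it with an exponentially weighted moving average, and sprays packets round-robin over the paths that stay below a threshold. `CongestionAwareSelector`, `alpha`, and `threshold` are illustrative names, not part of any MRC or Spectrum-X API, and the actual MRC path-selection logic may differ.

```python
from dataclasses import dataclass


@dataclass
class PathState:
    path_id: int
    congestion: float = 0.0  # moving average of a 0/1 congestion signal, in [0, 1]


class CongestionAwareSelector:
    """Hypothetical selector: spreads packets across paths, skipping paths
    whose smoothed congestion signal is at or above a threshold."""

    def __init__(self, num_paths: int, alpha: float = 0.2, threshold: float = 0.5) -> None:
        self.paths = [PathState(i) for i in range(num_paths)]
        self.alpha = alpha          # weight given to the newest congestion sample
        self.threshold = threshold  # paths at or above this are treated as overloaded
        self._rr = 0                # round-robin cursor over healthy paths

    def report(self, path_id: int, congested: bool) -> None:
        """Fold one congestion observation for a path into its moving average."""
        p = self.paths[path_id]
        sample = 1.0 if congested else 0.0
        p.congestion = (1 - self.alpha) * p.congestion + self.alpha * sample

    def pick(self) -> int:
        """Choose the path for the next packet."""
        healthy = [p.path_id for p in self.paths if p.congestion < self.threshold]
        if not healthy:
            # Every path looks busy: fall back to the least congested one.
            return min(self.paths, key=lambda p: p.congestion).path_id
        choice = healthy[self._rr % len(healthy)]
        self._rr += 1
        return choice


if __name__ == "__main__":
    sel = CongestionAwareSelector(num_paths=4)
    for _ in range(10):
        sel.report(2, congested=True)  # path 2 keeps reporting congestion
    print([sel.pick() for _ in range(9)])
```

Running it prints `[0, 1, 3, 0, 1, 3, 0, 1, 3]`: path 2 is skipped while its average stays above the threshold, and it rejoins the rotation once clean reports bring the average back down. The moving average is a deliberate choice in this sketch, since it keeps a single congestion report from immediately evicting a path.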

10. Resilience and Troubleshooting: Intelligent Recovery and Visibility

Packet loss is inevitable in any large network, but MRC minimizes its impact. When packets are lost, MRC uses intelligent retransmission for rapid, precise recovery, so short-lived interruptions do not cascade into GPU idle time and long-running jobs stay protected. Administrators also gain fine-grained visibility into and control over traffic paths, which simplifies operations and accelerates troubleshooting. With deep telemetry from Spectrum-X, operators can pinpoint issues quickly and optimize network performance. MRC's design emphasizes resilience, making AI training more predictable and robust.
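Part of what can make recovery precise in a multipath setting is a receiver that accepts packets out of order and names exactly which ones are missing. The Python sketch below contrasts that selective approach with a go-back-N baseline that resends everything from the first gap onward. It is a simplified toy under stated assumptions, not MRC's actual retransmission algorithm; `ReceiveTracker`, `go_back_n`, and `selective` are hypothetical names.

```python
class ReceiveTracker:
    """Hypothetical receiver that accepts packets in any order and reports gaps."""

    def __init__(self) -> None:
        self.received: set[int] = set()
        self.highest_seen = -1

    def on_packet(self, seq: int) -> None:
        self.received.add(seq)
        self.highest_seen = max(self.highest_seen, seq)

    def missing(self) -> list[int]:
        # Sequence numbers below the highest one seen that have not arrived.
        return [s for s in range(self.highest_seen + 1) if s not in self.received]


def go_back_n(tracker: ReceiveTracker, total: int) -> list[int]:
    """Baseline: resend the first missing packet and everything after it."""
    gaps = tracker.missing()
    return list(range(gaps[0], total)) if gaps else []


def selective(tracker: ReceiveTracker) -> list[int]:
    """Selective recovery: resend only the packets reported as missing."""
    return tracker.missing()


if __name__ == "__main__":
    tracker = ReceiveTracker()
    lost = {3, 7}  # packets dropped in flight (illustrative)
    for seq in range(10):
        if seq not in lost:
            tracker.on_packet(seq)
    print("go-back-N resends:", go_back_n(tracker, 10))
    print("selective resends:", selective(tracker))
```

With packets 3 and 7 lost out of 10, the script prints `[3, 4, 5, 6, 7, 8, 9]` for go-back-N and `[3, 7]` for selective retransmission. The sketch treats every gap as a loss; a real multipath transport must also distinguish loss from packets that are merely late on a slower path, and it needs timers to catch losses at the tail of a transfer that no later packet reveals.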

The combination of NVIDIA Spectrum-X Ethernet and MRC represents a quantum leap in AI networking. From industry adoption to open specifications, this technology is setting the standard for gigascale AI. As the race to build more powerful AI factories continues, Spectrum-X and MRC will remain at the forefront, enabling breakthroughs that were previously unimaginable. The future of AI depends on networks that can keep up—and this is how we build them.