Breaking News: Claude Code Enters Auto Mode with Safety-First Design

Anthropic today launched auto mode for its Claude Code AI coding assistant, a feature that lets multi-step software development tasks run with far less manual intervention. The system can autonomously plan, write, test, and debug code, but every action passes through layered safety checks, and sensitive operations require human approval.

“We designed auto mode to push the boundaries of developer productivity while ensuring humans remain in control of critical decisions,” said Michael Gersten, Anthropic’s VP of Engineering, in a press briefing. The update targets teams already using Claude Code for pair programming, now allowing them to offload entire workflow sequences to the AI.

How Auto Mode Works

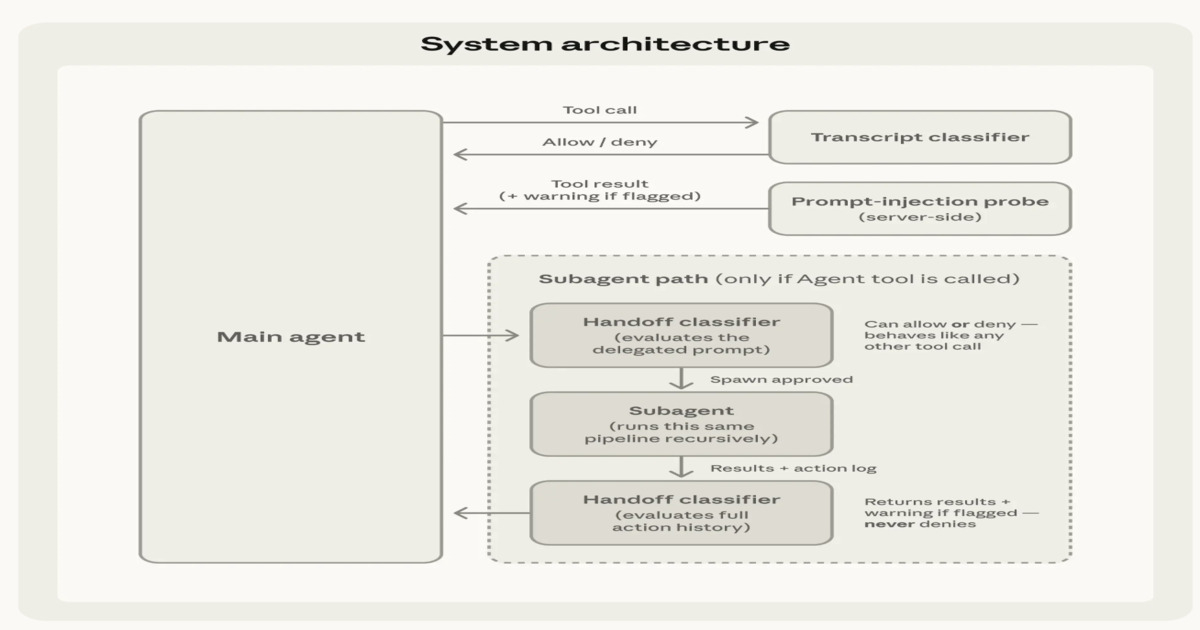

Auto mode runs as a pipeline of automated steps. The system first filters incoming user requests to block malicious or non-coding prompts. It then evaluates each proposed action, whether planning or coding, against a two-stage classification system that tags operations as low, medium, or high risk.

For high-risk actions, such as modifying production databases or deploying to live servers, the system pauses and requests human confirmation before proceeding. Lower-risk tasks, like refactoring internal functions or updating documentation, can execute automatically. This tiered approach aims to balance speed with security.
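To make the tiered gating concrete, here is a minimal Python sketch of the control flow described above. Everything in it is hypothetical: the `RiskTier`, `Action`, `classify_action`, and `run_pipeline` names, the keyword-free rule set, and the `approve` callback are illustrative assumptions, not Anthropic's implementation or any Claude Code API. Anthropic has not published how its classifiers work, so the "two stages" here are a toy stand-in (intent, then impact), and the request-filtering step is left out.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Callable


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class Action:
    description: str  # human-readable summary of the proposed step
    target: str       # what the step touches, e.g. "docs", "src", "prod-db"
    writes: bool      # True if the step changes state


# Hypothetical set of targets treated as high impact (assumption).
HIGH_IMPACT_TARGETS = {"prod-db", "live-server"}


def classify_action(action: Action) -> RiskTier:
    """Toy two-stage classifier: stage 1 checks intent, stage 2 checks impact."""
    # Stage 1 (intent): read-only steps are low risk regardless of target.
    if not action.writes:
        return RiskTier.LOW
    # Stage 2 (impact): writes to high-impact targets are high risk;
    # any other write is treated as medium risk.
    if action.target in HIGH_IMPACT_TARGETS:
        return RiskTier.HIGH
    return RiskTier.MEDIUM


def run_pipeline(actions: list[Action], approve: Callable[[Action], bool]) -> list[str]:
    """Run actions in order, pausing for human approval on high-risk ones."""
    log = []
    for action in actions:
        tier = classify_action(action)
        if tier is RiskTier.HIGH and not approve(action):
            # Assumption: a declined high-risk step halts the whole run.
            log.append(f"halted (not approved): {action.description}")
            break
        # The article does not say how medium-risk steps are handled;
        # this sketch lets LOW and MEDIUM steps run without a prompt.
        log.append(f"executed [{tier.name}]: {action.description}")
    return log


if __name__ == "__main__":
    steps = [
        Action("update README", target="docs", writes=True),
        Action("run unit tests", target="src", writes=False),
        Action("apply schema migration", target="prod-db", writes=True),
    ]
    # A reviewer who declines every prompt stops the run at the migration.
    for line in run_pipeline(steps, approve=lambda a: False):
        print(line)
```

Halting on a declined high-risk step, rather than skipping it, is a conservative choice in this sketch, since later steps may depend on the one that was refused.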

Safety Mechanisms at Every Step

Anthropic emphasized that auto mode is not a fully autonomous agent. “We specifically avoid giving Claude Code the ability to execute commands on its own without human checkpoints,” Gersten explained. “Our goal is a collaborative assistant, not a replacement for the developer.”

The safety stack includes input filtering to prevent prompt injection attacks, real-time action evaluation to detect risky patterns, and a two-stage classification system that assesses both intent and potential impact. Dr. Elena Torres, a cybersecurity analyst at the SANS Institute, called the approach “a welcome departure from the ‘move fast and break things’ philosophy of earlier AI coding tools.”

Background: From Guided Assistance to Autonomous Workflows

Claude Code originally launched in early 2025 as a conversational coding partner that could edit files, run shell commands, and answer coding questions—but still required significant human prompting for each step. Auto mode builds on that foundation by chaining multiple commands together into a single session.


The feature has been tested internally with Anthropic’s own engineering teams and a small group of beta users since December. Early feedback indicated a 40% reduction in time spent on boilerplate tasks like writing tests or configuring CI/CD pipelines, according to company data shared with reporters.

What This Means for Developers and AI Safety

Auto mode could dramatically accelerate software development by letting AI handle routine coding grunt work while humans focus on architecture, security, and creative problem-solving. For startups and small teams, the tool may level the playing field against larger organizations with more developer resources.

However, experts caution that any autonomous coding system introduces new risks—especially around code quality, compliance, and unintended side effects. “If the AI modifies a function that dozens of other modules depend on, even a low-risk task can cascade into a deployment outage,” said Dr. Raj Patel, a software engineering professor at Stanford. “Anthropic’s human gates are necessary, but developers must still review every automated change.”

The launch comes amid a growing industry debate over AI autonomy in critical infrastructure. Unlike competitors that offer open-ended code-generation agents, Claude Code’s auto mode requires explicit permission for any action that could persistently alter system state. Whether that cautious approach will satisfy enterprise clients remains to be seen, but early analyst reports have been positive.
